Every enterprise has them. Systems built a decade ago - sometimes two decades ago - that still run the business. Monolithic platforms where critical business logic lives inside hundreds of stored procedures. Databases with sprawling schemas nobody fully understands. Security models built on assumptions that no longer hold. Workflow engines so rigid that a single exception forces users to restart an entire process from scratch.

These systems work. They also cannot scale, cannot adapt, and cannot support the pace of change the business demands.

Application modernization has always been hard. Not because the code is hard to rewrite, but because the knowledge buried inside these systems is hard to recover. Documentation is missing or outdated. The engineers who built the original systems have moved on. Business rules live in code paths nobody remembers writing, in database triggers nobody wants to touch, and in the institutional memory of employees who are one retirement away from taking it with them.

This is the real challenge. And it is exactly where agentic AI changes the equation.

When leadership teams evaluate modernization efforts, the conversation often starts with technology: What language should we rewrite in? Should we move to the cloud? Should we containerize? These are valid questions, but they are not the first questions.

The first question is: Do we actually understand what our current system does?

In our experience working on enterprise AI-native transformations, the answer is almost always “partially.” Teams understand the happy path. They know what the system is supposed to do. What they rarely have is a complete picture of the edge cases, the exception handling, and the implicit business rules that have accumulated over years of patches, workarounds, and customer-specific customizations.

Consider a typical enterprise platform in a regulated industry - healthcare claims processing, financial services onboarding, supply chain management, or workforce compliance. The core workflows in these systems often span identity verification, document processing, regulatory compliance checks, multi-party integrations, and client-specific configuration rules. What appears on the surface as a single “process” is really dozens of interleaved concerns wired together through brittle, sequential logic that breaks if anything arrives out of order.

Or consider a SaaS platform that has accumulated years of client-specific customizations - dynamically generated database columns, per-tenant workflow variations, hardcoded module IDs scattered across configuration files. The system’s behavior is effectively different for every deployment. Nobody can describe the full matrix of configurations from memory.

This is not a system you modernize with a code translator. This is a system you modernize by first understanding it deeply, then redesigning it intentionally.

Traditional approaches to application modernization generally follow a predictable pattern. A consulting firm conducts a multi-month discovery phase, produces a thick requirements document, and then a development team begins a multi-year rewrite. By the time the new system is ready, the requirements have shifted, the legacy system has accumulated more changes, and the organization has spent millions with uncertain results.

The “lift and shift” alternative - moving the application to the cloud without changing its architecture - avoids the rewrite risk but also avoids the modernization benefits. You end up running the same rigid, poorly documented monolith in a more expensive hosting environment.

Even “strangler fig” approaches, where you incrementally replace legacy functionality behind a facade, struggle when the underlying system is too tightly coupled to decompose cleanly. If your database schema was never designed for modular access, if your business logic is spread across stored procedures, triggers, application code, and middleware, then finding clean boundaries to strangle around is itself a significant engineering challenge.

The common thread in all these approaches is that they underestimate the knowledge recovery problem. The code is the easy part. Understanding what the code actually does - across all configurations, all edge cases, all the undocumented integrations - is the hard part.

This is where agentic AI enters the picture - not as a magic solution, but as a fundamentally different capability for dealing with the knowledge problem.

Let us be precise about terminology. Generative AI - the kind most enterprises have been experimenting with over the past two years - is reactive and stateless. You give it a prompt, it produces an output. It is useful for individual tasks: explaining a code snippet, generating a test case, drafting documentation.

Agentic AI is different. It adds orchestration, memory, and goal-oriented behavior. An agentic system can plan multi-step tasks, invoke tools, iterate on results, maintain context across long-running workflows, and coordinate multiple specialized agents toward a shared objective. In modernization contexts, this distinction matters enormously. Generative AI supports tasks. Agentic AI supports processes.

Be skeptical when vendors quote high accuracy numbers as if they were the whole story. This is still generative AI in early 2026. Treat claims as marketing until you have seen working results in your own codebase, under your constraints and your risk profile. Only believe what you can validate.

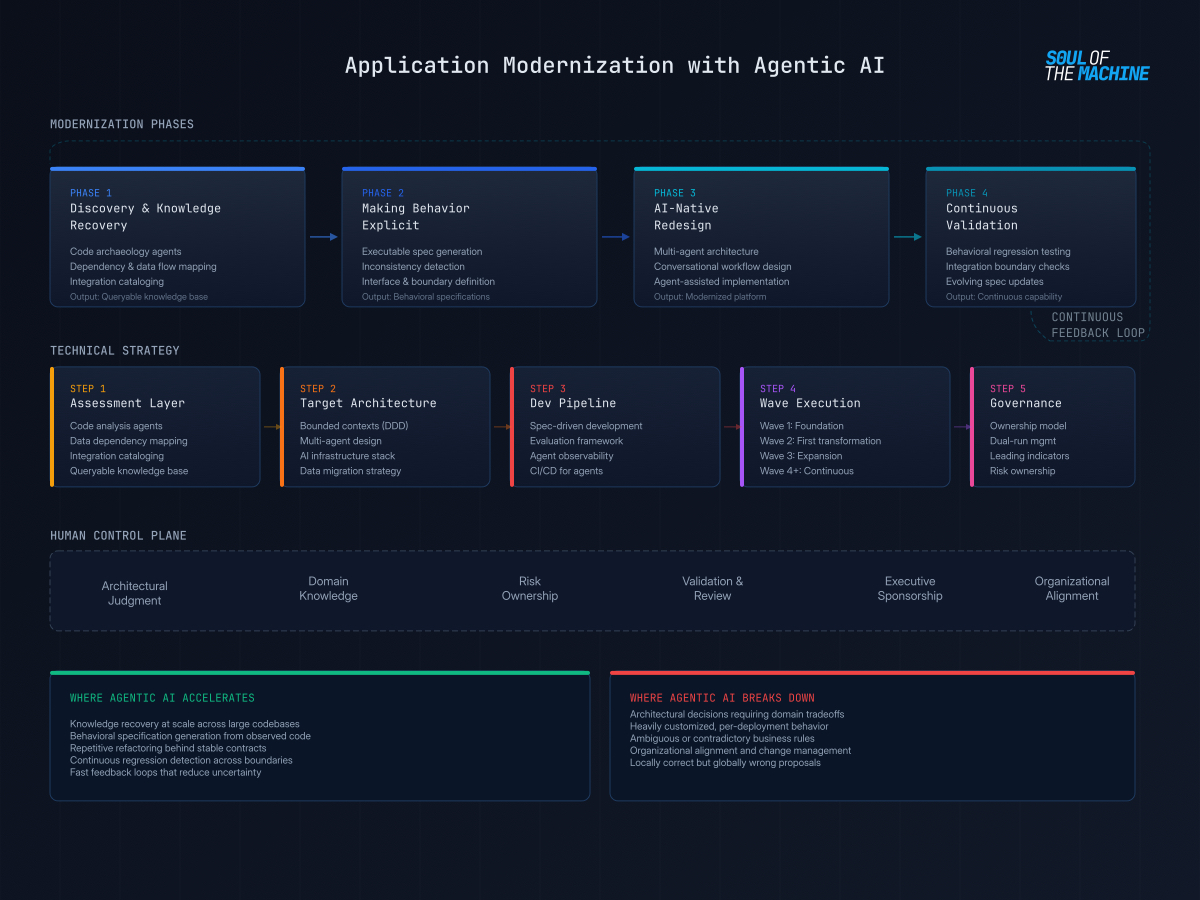

Here is how agentic AI plays out in practice across the phases of a modernization effort.

In early phases, agentic AI is most valuable as an exploration layer. Rather than relying on months of manual code archaeology, teams can deploy agents that traverse legacy codebases and build maps of execution paths, data access patterns, and implicit dependencies across modules.

An agent can correlate database access patterns, batch jobs, and integration code to surface where business rules are actually implemented - not where the documentation claims they are. In systems written in older languages or frameworks, agents can generate explanations of code behavior and reconstruct missing documentation, giving teams a shared starting point.

In one engagement, the legacy platform had accumulated hundreds of workflow configurations across different client deployments - each with subtle variations in field requirements, validation rules, and conditional logic. No single person on the team could explain the full matrix. An agentic discovery process mapped the variations, identified which configurations were actively used versus dormant, and produced a rationalized view that became the foundation for the redesign.

The output of this phase is not “truth.” It is a set of well-structured hypotheses that make further investigation faster and more focused. No production code is changed yet. The goal is to make complexity visible.

Once a baseline understanding exists, the next challenge is externalizing behavior that previously lived only in people’s heads or in fragile code paths.

Agentic AI helps here by proposing executable specifications and tests based on observed behavior in the code, identifying inconsistencies between similar logic implemented in different parts of the system, and helping teams define clearer interfaces and boundaries by surfacing what data and behavior actually cross them.

Consider a platform where document processing, identity verification, and compliance checking are all interleaved within a single workflow. The current system handles these as sequential steps in a monolithic process. An agentic analysis can identify the actual data dependencies between these steps, revealing which ones genuinely need to be sequential and which ones can be parallelized or decoupled. This is the kind of insight that transforms a modernization from “rebuild the same thing in a new language” to “redesign the architecture based on actual behavioral requirements.”
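To make the dependency analysis concrete, here is a minimal sketch of how mapped data dependencies can be turned into parallelizable stages. The step names and their dependencies are hypothetical, chosen only for illustration; in practice they would come from the agent's analysis and be reviewed by people who know the domain.

```python
from graphlib import TopologicalSorter

# Hypothetical data dependencies: each step maps to the steps whose output it reads.
DEPENDENCIES = {
    "identity_verification": set(),
    "document_processing": set(),
    "compliance_check": {"identity_verification", "document_processing"},
    "client_notification": {"compliance_check"},
}


def parallel_stages(dependencies):
    """Group steps into ordered stages; steps within one stage do not depend on each other."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies are circular
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose inputs are already available
        stages.append(ready)
        sorter.done(*ready)
    return stages


STAGES = parallel_stages(DEPENDENCIES)
for number, steps in enumerate(STAGES, start=1):
    print(f"stage {number}: {', '.join(steps)}")
```

In this made-up example the first stage holds two steps that the legacy workflow would have run one after the other, even though neither reads the other's output.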

The value here is speed and coverage. Agents can iterate across areas of the system that human teams would not have the time or patience to inspect manually. The goal is not correctness on the first try, but fast feedback loops that reduce uncertainty.

This is where modernization diverges most sharply from traditional approaches. Instead of simply translating the legacy system into a modern stack, agentic AI enables teams to redesign the system as an AI-native architecture from the ground up.

What does AI-native mean in practice? It means the new system is designed around intelligent agents from the start - not as a monolithic application with AI bolted on as a feature.

For example, a legacy workflow system might present users with a rigid, multi-step linear process where every field is presented sequentially, data is re-entered multiple times, and any error forces a restart. An AI-native redesign replaces this with a multi-agent architecture: a conversational copilot that guides users through the process in an adaptive, non-linear flow. Specialized agents handle different concerns - one for contextual guidance, another for structured data collection, another for compliance-sensitive operations, and another for proactive error detection and resolution. The user experience is fundamentally transformed, not merely reskinned.

Agentic AI also accelerates the implementation itself. Agents can propose small, reviewable refactorings behind stable contracts, assist with framework and runtime upgrades where the transformation pattern is known, and generate repetitive changes across modules while preserving agreed-upon behavior. The agent acts less like an autonomous developer and more like a force multiplier for experienced engineers.

Legacy systems are rarely static, and neither is modernization. While you are rebuilding, the legacy system continues to evolve. New patches are applied. New configurations are added. New edge cases emerge.

Agentic approaches help by continuously validating behavior as changes are introduced, detecting regressions across integration boundaries, and updating specifications and tests as understanding improves over time. This shifts modernization from a one-off project to a continuous capability.
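One simple way to picture continuous behavioral validation is a characterization check: replay recorded inputs through both the legacy and the modernized implementation and report every difference. The sketch below assumes both can be called as functions; the fee rule, the function names, and the recorded inputs are invented for illustration.

```python
def legacy_fee(amount_cents, tier):
    """Stand-in for the legacy behavior (hypothetical rule with a minimum fee)."""
    if tier == "gold":
        return 0
    return max(100, amount_cents * 2 // 100)


def modern_fee(amount_cents, tier):
    """Stand-in for the reimplementation; it deliberately omits the minimum fee."""
    if tier == "gold":
        return 0
    return amount_cents * 2 // 100


def find_regressions(recorded_inputs, legacy, modern):
    """Return one entry per recorded input where the two implementations disagree."""
    differences = []
    for args in recorded_inputs:
        expected, actual = legacy(*args), modern(*args)
        if expected != actual:
            differences.append({"input": args, "legacy": expected, "modern": actual})
    return differences


# Hypothetical inputs captured from production traffic.
RECORDED = [(10_000, "standard"), (2_000, "standard"), (2_000, "gold")]
REGRESSIONS = find_regressions(RECORDED, legacy_fee, modern_fee)
print(REGRESSIONS)
```

A difference is not automatically a bug in the new code. It may expose a legacy quirk that the business no longer wants, which is a decision for people rather than for the check.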

Intellectual honesty demands acknowledging the limits. Agentic AI struggles in specific, predictable ways.

It struggles when architectural decisions require deep domain understanding and tradeoffs that cannot be derived from code alone. Deciding whether to decompose a monolith into microservices versus a modular monolith, choosing between event-driven and request-driven integration patterns, determining the right level of multi-tenancy for a SaaS platform - these are judgment calls that require business context, organizational constraints, and experience with the consequences of different choices.

It struggles when business rules are ambiguous, contradictory, or historically grown. In legacy systems, it is common to find that the same business rule is implemented differently in different modules, that exception handling has become the rule, and that nobody can say with confidence which implementation is “correct.” Agents can surface these contradictions. They cannot resolve them.

It struggles when systems are so customized that generalized patterns no longer apply. Platforms with hundreds of client-specific configurations, dynamically generated database schemas, and deeply intertwined customization layers resist automated analysis because the system’s behavior is effectively different for every deployment.

In all these cases, agents can confidently propose changes that are locally correct but globally wrong. Without strong guardrails and human review, automation amplifies errors faster than it delivers value.

Even with perfect technical insight, application modernization rarely fails because of code alone. It fails because ownership is unclear, teams are fragmented, incentives favor stability over improvement, and touching a legacy system is often perceived as career risk rather than contribution.

Agentic AI does not fix organizational misalignment. It does not resolve political boundaries, unclear responsibilities, or the tension between “keep the system running” and “make it better.” What it can do is reduce the cost of understanding and experimentation enough that modernization becomes feasible within these constraints. The decision to act, accept risk, and prioritize change remains a human and organizational one.

For CIOs and AI leaders, this means modernization with agentic AI still requires executive sponsorship, clear ownership, and a willingness to invest in the foundational work before reaching for tools. The technology accelerates execution. It does not substitute for strategy.

The strategic question is not “should we use agentic AI for modernization?” but “how do we architect a modernization program that leverages agentic AI effectively?” Here is a technical strategy framework drawn from our work on enterprise-scale transformations.

Before any agent writes a line of code, you need a structured assessment of your legacy landscape. This is where agentic AI earns its first returns.

Deploy code analysis agents that scan your repositories and produce dependency graphs, call chain maps, and data flow diagrams. Static analysis tools such as SonarQube and Semgrep can be orchestrated by agents that correlate findings across repositories, identify dead code paths, and flag modules with the highest cyclomatic complexity. The agent’s job is not just to run the tools but to synthesize the outputs into a navigable map of system behavior.
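As a small illustration of what a dependency map looks like as data, the sketch below builds a module-level import graph with Python's standard `ast` module. Python stands in here for whatever language the legacy system uses, and the module names and sources are hypothetical. A real assessment would rely on dedicated analyzers rather than this toy.

```python
import ast


def imported_modules(source):
    """Return the top-level module names imported by a piece of Python source."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            found.add(node.module.split(".")[0])
    return found


def dependency_graph(sources):
    """Map each module to the in-repository modules it imports."""
    known = set(sources)
    return {
        name: (imported_modules(text) & known) - {name}
        for name, text in sources.items()
    }


# Hypothetical repository contents, keyed by module name.
SOURCES = {
    "billing": "import os\nimport ledger\nfrom rules import tax\n",
    "ledger": "import rules\n",
    "rules": "",
    "legacy_reports": "import billing\n",
}

GRAPH = dependency_graph(SOURCES)
# Fan-in: how many other modules import each module.
FAN_IN = {name: sum(name in deps for deps in GRAPH.values()) for name in GRAPH}
# Modules nobody imports are either entry points or dead-code candidates.
UNREFERENCED = sorted(name for name, count in FAN_IN.items() if count == 0)
print(GRAPH, FAN_IN, UNREFERENCED)
```

A module with zero fan-in is only a candidate for dead code. Whether it is an entry point, a scheduled job, or truly unused still needs a human answer.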

Map data dependencies systematically. Legacy databases are where modernization projects go to die. Have agents catalog table relationships, identify which stored procedures and triggers encode business logic versus infrastructure plumbing, and produce a data lineage map showing how information flows from ingestion through processing to reporting. Pay special attention to schemas that have grown organically - dynamic column generation, denormalized tables with hundreds of columns, and tables whose purpose nobody can explain. These are the landmines.
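The cataloging step can be sketched against a database's own metadata. The example below uses an in-memory SQLite database purely because it is self-contained; the schema is hypothetical, and an enterprise database would expose the same kind of information through its own system catalog rather than through these queries.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical legacy schema with one foreign key and one trigger that encodes behavior.
conn.executescript("""
CREATE TABLE client (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE claim (
    id INTEGER PRIMARY KEY,
    client_id INTEGER REFERENCES client(id),
    status TEXT
);
CREATE TABLE audit_log (claim_id INTEGER, note TEXT);
CREATE TRIGGER claim_status_audit AFTER UPDATE OF status ON claim
BEGIN
    INSERT INTO audit_log (claim_id, note) VALUES (NEW.id, 'status changed');
END;
""")


def catalog(connection):
    """Collect tables, foreign-key relationships, and triggers from SQLite metadata."""
    tables = [row[0] for row in connection.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
    foreign_keys = []
    for table in tables:
        # PRAGMA row layout: (id, seq, table, from, to, on_update, on_delete, match)
        for row in connection.execute(f"PRAGMA foreign_key_list('{table}')"):
            foreign_keys.append((table, row[3], row[2], row[4]))
    triggers = [(row[0], row[1]) for row in connection.execute(
        "SELECT name, tbl_name FROM sqlite_master WHERE type = 'trigger' ORDER BY name")]
    return {"tables": tables, "foreign_keys": foreign_keys, "triggers": triggers}


CATALOG = catalog(conn)
print(CATALOG)
```

The trigger list is the interesting part: each entry is a place where behavior lives in the database rather than in application code, and each one needs to be classified as business logic or plumbing.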

Catalog integration touchpoints. Most enterprise systems do not exist in isolation. They exchange data with ERP systems, payment processors, identity providers, compliance databases, and partner APIs. An agentic discovery process should map every external integration, document the protocol and data format, identify which integrations are actively used versus vestigial, and flag any that depend on deprecated APIs or undocumented endpoints.

The deliverable from this step is not a report. It is a queryable knowledge base - a structured representation of system understanding that agents and humans can both reference throughout the modernization effort.

With the assessment in hand, the next step is defining what you are building toward. This is where architectural judgment matters most - and where agentic AI is least useful as an autonomous decision-maker but most useful as a design accelerant.

Identify bounded contexts and service boundaries. Use the dependency and data flow maps from Step 1 to identify natural seams in the system. Where do data dependencies cluster? Where are the integration boundaries already implicit? Domain-driven design principles apply here: look for aggregates, bounded contexts, and anti-corruption layers that align with business capabilities rather than technical modules. Agents can propose candidate boundaries based on code analysis, but humans must validate them against business domain knowledge.

Design multi-agent architectures for complex workflows. For workflows that currently live inside monolithic process engines, consider decomposing them into coordinated agent systems. A supervisor agent manages the overall workflow state and routes to specialized sub-agents based on context. Each sub-agent handles a distinct concern - data collection, document processing, compliance validation, error resolution - with its own tools, knowledge bases, and guardrails. This pattern provides natural modularity, independent scalability, and the ability to evolve individual agents without redeploying the entire system.
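The supervisor pattern described above can be sketched in a few lines. This is a structural illustration only: the sub-agents are plain functions with hypothetical names, where a real system would wrap model calls, tools, and guardrails, and the routing rule is a hand-written assumption rather than anything a framework prescribes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class WorkflowState:
    data: dict = field(default_factory=dict)
    history: list = field(default_factory=list)


# Hypothetical sub-agents, each owning one concern and mutating shared state.
def collect_data(state):
    state.data["applicant"] = "ACME Ltd"


def process_documents(state):
    state.data["documents_ok"] = True


def validate_compliance(state):
    state.data["compliant"] = state.data["documents_ok"]


def route(state) -> Optional[str]:
    """Pick the next sub-agent from what is still missing; None means finished."""
    if "applicant" not in state.data:
        return "data_collection"
    if "documents_ok" not in state.data:
        return "document_processing"
    if "compliant" not in state.data:
        return "compliance_validation"
    return None


class Supervisor:
    def __init__(self, agents: Dict[str, Callable], router: Callable, max_steps: int = 10):
        self.agents, self.router, self.max_steps = agents, router, max_steps

    def run(self, state: WorkflowState) -> WorkflowState:
        for _ in range(self.max_steps):  # hard cap so a routing bug cannot loop forever
            name = self.router(state)
            if name is None:
                return state
            self.agents[name](state)
            state.history.append(name)
        raise RuntimeError("workflow did not converge within max_steps")


AGENTS = {
    "data_collection": collect_data,
    "document_processing": process_documents,
    "compliance_validation": validate_compliance,
}
FINAL = Supervisor(AGENTS, route).run(WorkflowState())
print(FINAL.history, FINAL.data)
```

Because the router decides from state rather than from a fixed sequence, a workflow that starts with some data already present simply skips the corresponding agent.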

Select the AI infrastructure stack intentionally. The choice of foundation model provider, vector database, orchestration framework, and observability tooling should be driven by your specific requirements.

Plan the data migration strategy as a first-class workstream. Data migration is where modernization efforts accumulate the most risk. Define a strategy that addresses schema transformation (how legacy schemas map to the target data model), data validation (how you verify completeness and correctness after migration), dual-write periods (how long both systems need to operate in parallel), and rollback procedures (how you recover if migration introduces data integrity issues). Agents can assist with schema mapping and data validation rule generation, but the migration strategy itself requires human judgment about acceptable risk.
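For the data validation part, a common baseline is to compare row counts and a content fingerprint between the legacy and target tables. The sketch below does this with two in-memory SQLite databases; the table names, column names, and rows are hypothetical, and a real migration would also need to account for intentional transformations between the two schemas.

```python
import hashlib
import sqlite3


def table_fingerprint(conn, table, key_column, columns):
    """Return (row_count, sha256) over the selected columns, ordered by key."""
    # Identifiers come from trusted configuration here, never from user input.
    query = f"SELECT {', '.join(columns)} FROM {table} ORDER BY {key_column}"
    digest, count = hashlib.sha256(), 0
    for row in conn.execute(query):
        digest.update(repr(row).encode("utf-8"))
        count += 1
    return count, digest.hexdigest()


def validate_migration(legacy, target, legacy_spec, target_spec):
    legacy_rows, legacy_hash = table_fingerprint(legacy, **legacy_spec)
    target_rows, target_hash = table_fingerprint(target, **target_spec)
    return {
        "legacy_rows": legacy_rows,
        "target_rows": target_rows,
        "row_counts_match": legacy_rows == target_rows,
        "content_matches": legacy_hash == target_hash,
    }


def make_db(ddl, insert_sql, rows):
    conn = sqlite3.connect(":memory:")
    conn.execute(ddl)
    conn.executemany(insert_sql, rows)
    return conn


ROWS = [(1, "Ada"), (2, "Grace"), (3, "Edsger")]
legacy = make_db("CREATE TABLE cust (cust_id INTEGER, full_name TEXT)",
                 "INSERT INTO cust VALUES (?, ?)", ROWS)
incomplete = make_db("CREATE TABLE customer (id INTEGER, name TEXT)",
                     "INSERT INTO customer VALUES (?, ?)", ROWS[:2])
complete = make_db("CREATE TABLE customer (id INTEGER, name TEXT)",
                   "INSERT INTO customer VALUES (?, ?)", ROWS)

LEGACY_SPEC = {"table": "cust", "key_column": "cust_id", "columns": ["cust_id", "full_name"]}
TARGET_SPEC = {"table": "customer", "key_column": "id", "columns": ["id", "name"]}

REPORT_INCOMPLETE = validate_migration(legacy, incomplete, LEGACY_SPEC, TARGET_SPEC)
REPORT_COMPLETE = validate_migration(legacy, complete, LEGACY_SPEC, TARGET_SPEC)
print(REPORT_INCOMPLETE, REPORT_COMPLETE)
```

A check like this can also run repeatedly during the dual-write period, so that drift between the two systems is noticed early instead of at cutover.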

With the target architecture defined, the next step is establishing a development pipeline that leverages agentic AI throughout the implementation lifecycle.

Spec-driven development with AI collaboration. Rather than jumping straight to code, define specifications for each module or agent before implementation begins. A specification captures the intended behavior, inputs, outputs, constraints, and acceptance criteria. In our experience, AI coding agents (Claude Code, GitHub Copilot, Cursor) are far more effective when working against a well-defined spec than when interpreting ambiguous requirements. The spec becomes both the contract for the implementation and the basis for automated validation.

Establish an evaluation framework early. Agentic systems are non-deterministic. The same input may produce different outputs across invocations. This means traditional testing approaches - exact input/output matching - are insufficient. Build an evaluation framework that measures agent behavior across dimensions: task completion rate, accuracy of extracted information, compliance with business rules, latency, and cost per invocation. Use LLM-as-judge patterns for qualitative evaluation and deterministic checks for business-critical constraints. Run evaluations continuously, not just at release boundaries.
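A minimal evaluation harness along these lines might look like the sketch below. It covers only repeated runs, aggregate rates, and deterministic checks; the LLM-as-judge part is left out. The agent is a stub that replays canned outputs, including one failed run and one rule violation, and all names and values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    prompt: str
    expected: dict


def evaluate(agent, cases, hard_checks, runs_per_case=3):
    """Run each case several times and aggregate rates instead of exact matches."""
    results = []
    for case in cases:
        for _ in range(runs_per_case):
            output = agent(case.prompt)
            completed = output is not None
            results.append({
                "completed": completed,
                "accurate": completed and all(
                    output.get(key) == value for key, value in case.expected.items()),
                # Deterministic business-rule checks apply to every completed run.
                "violations": [name for name, check in hard_checks.items()
                               if completed and not check(output)],
            })
    total = len(results)
    return {
        "task_completion_rate": sum(r["completed"] for r in results) / total,
        "accuracy": sum(r["accurate"] for r in results) / total,
        "hard_constraint_violations": sum(len(r["violations"]) for r in results),
    }


# Stub agent: replays canned outputs to imitate run-to-run variation.
_canned = iter([
    {"amount": 120, "currency": "EUR"},
    {"amount": 120, "currency": "EUR"},
    None,                                  # a run that failed to complete
    {"amount": -5, "currency": "EUR"},     # a run that breaks a business rule
])


def stub_agent(prompt):
    return next(_canned)


CASES = [EvalCase("Extract the invoice total.", {"amount": 120, "currency": "EUR"})]
HARD_CHECKS = {"amount_non_negative": lambda output: output["amount"] >= 0}
METRICS = evaluate(stub_agent, CASES, HARD_CHECKS, runs_per_case=4)
print(METRICS)
```

Latency and cost per invocation would be collected in the same loop; they are omitted here to keep the sketch short.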

Implement observability from day one. Agentic systems are difficult to debug in production without comprehensive observability. Instrument every agent invocation with traces that capture the input, the model used, the tools invoked, the intermediate reasoning, and the final output. Use structured logging that supports correlation across multi-agent workflows. Tools like Langfuse, LangSmith, or cloud-native tracing solutions (AWS X-Ray, Azure Monitor) should be integrated into the agent infrastructure layer, not bolted on later.
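To show the shape of such a trace record, here is a small sketch using only the standard library. It is not the API of Langfuse, LangSmith, or any tracing product: the in-memory `TRACE_SINK` list stands in for a real exporter, and the field names, agent names, and model names are assumptions.

```python
import json
import time
import uuid
from contextlib import contextmanager

TRACE_SINK = []  # stand-in for an exporter to a real tracing backend


@contextmanager
def agent_span(trace_id, agent, model, payload):
    """Record one agent invocation as a structured, correlatable log entry."""
    record = {
        "trace_id": trace_id,          # shared across every agent in one workflow
        "span_id": uuid.uuid4().hex,   # unique per invocation
        "agent": agent,
        "model": model,
        "input": payload,
        "tools": [],
        "output": None,
        "error": None,
    }
    started = time.perf_counter()
    try:
        yield record
    except Exception as exc:
        record["error"] = repr(exc)
        raise
    finally:
        record["duration_ms"] = round((time.perf_counter() - started) * 1000, 3)
        TRACE_SINK.append(json.dumps(record))


# Two hypothetical agents taking part in the same workflow.
trace_id = uuid.uuid4().hex
with agent_span(trace_id, "supervisor", "example-model-a", {"case": 42}) as span:
    span["tools"].append("lookup_case")
    span["output"] = "route:compliance"
with agent_span(trace_id, "compliance", "example-model-b", {"case": 42}) as span:
    span["output"] = "approved"

SPANS = [json.loads(line) for line in TRACE_SINK]
print(SPANS)
```

The shared `trace_id` is what makes a multi-agent workflow debuggable: every record from one user request can be pulled back together regardless of which agent emitted it.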

Create a CI/CD pipeline for agents. Agents need the same deployment rigor as traditional software - versioned configurations, automated testing gates, staged rollouts, and rollback capabilities. Define agent configurations as code (prompts, tool definitions, guardrail rules, model selections) and manage them in version control. Automate the evaluation suite as a CI gate so that no agent changes reach production without passing behavioral validation.
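The two ideas in this paragraph, configuration as code and evaluation as a gate, can be sketched as follows. The configuration fields, metric names, and threshold values are hypothetical; each team would set its own.

```python
import hashlib
import json

# Hypothetical agent configuration, kept in version control alongside the code.
AGENT_CONFIG = {
    "model": "example-model-v1",
    "prompt": "You are a claims intake assistant.",
    "tools": ["lookup_policy", "create_case"],
    "guardrails": {"max_tool_calls": 5},
}


def config_version(config):
    """Derive a stable identifier from the config so every deployment is traceable."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical release thresholds: (direction, limit).
THRESHOLDS = {
    "task_completion_rate": ("min", 0.95),
    "accuracy": ("min", 0.90),
    "hard_constraint_violations": ("max", 0),
}


def gate(metrics, thresholds):
    """Return the reasons to block a release; an empty list means the gate passes."""
    failures = []
    for name, (direction, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: metric missing")
        elif direction == "min" and value < limit:
            failures.append(f"{name}: {value} is below the minimum {limit}")
        elif direction == "max" and value > limit:
            failures.append(f"{name}: {value} is above the maximum {limit}")
    return failures


PASSING = {"task_completion_rate": 0.97, "accuracy": 0.93, "hard_constraint_violations": 0}
FAILING = {"task_completion_rate": 0.97, "accuracy": 0.85}

print(config_version(AGENT_CONFIG), gate(PASSING, THRESHOLDS), gate(FAILING, THRESHOLDS))
```

In a pipeline, a non-empty result from `gate` would fail the job, for example by exiting with a non-zero status. Treating a missing metric as a failure keeps a broken evaluation run from silently passing.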

Structure the modernization as a series of bounded waves, each delivering measurable value while building toward the full target architecture.

Wave 1 - Foundation: Stand up the AI infrastructure stack, implement the core agent framework, deploy the first agent (typically a read-only assistant that queries the legacy system without modifying it), and establish the evaluation and observability baseline.

Wave 2 - First workflow transformation: Select a high-impact, moderate-complexity workflow and implement the full agent-based replacement. This is your proof of concept at production scale. Measure everything - user experience improvements, processing time reduction, error rates, and operational cost.

Wave 3 - Expansion and integration: Extend the agent architecture to additional workflows, integrate with surrounding systems, implement the data migration for completed modules, and begin decommissioning legacy components behind the modernized front.

Wave 4 and beyond - Continuous modernization: Shift from project mode to product mode. The agent infrastructure becomes a platform capability that teams use to continuously evolve and improve the system. New workflows are designed agent-first. Legacy components are migrated incrementally as priorities dictate.

Modernization governance is where executive leadership earns its keep.

Define clear ownership for the modernized system. The most common failure mode is a modernization effort that produces a new system nobody feels responsible for. Assign product ownership to the modernized platform from day one, not after the migration is complete.

Manage the dual-run period explicitly. There will be a period where both the legacy and modernized systems operate in parallel. Define which system is the source of truth for each data domain, how conflicts are resolved, and what triggers the cutover.
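Making these rules explicit can be as simple as writing them down as data. The sketch below declares a source of truth per data domain, resolves conflicts accordingly, and expresses one possible cutover trigger. The domain names, the ownership assignments, and the 14-day threshold are assumptions for illustration only.

```python
from enum import Enum


class System(Enum):
    LEGACY = "legacy"
    MODERN = "modern"


# Hypothetical ownership map: which system is authoritative for each data domain.
SOURCE_OF_TRUTH = {
    "customer_profile": System.MODERN,
    "billing": System.LEGACY,
}


def resolve(domain, legacy_value, modern_value):
    """Pick the authoritative value and flag disagreement for later review."""
    owner = SOURCE_OF_TRUTH[domain]  # KeyError on an undeclared domain is intentional
    value = modern_value if owner is System.MODERN else legacy_value
    return {
        "domain": domain,
        "owner": owner.value,
        "value": value,
        "conflict": legacy_value != modern_value,
    }


def ready_for_cutover(daily_conflict_counts, required_clean_days=14):
    """One possible trigger: the most recent N days show no conflicts at all."""
    recent = daily_conflict_counts[-required_clean_days:]
    return len(recent) == required_clean_days and all(count == 0 for count in recent)


BILLING = resolve("billing", legacy_value=100, modern_value=120)
PROFILE = resolve("customer_profile", "a@example.com", "a@example.com")
print(BILLING, PROFILE, ready_for_cutover([3, 0, 0], required_clean_days=2))
```

Failing loudly on an undeclared domain is a deliberate choice in this sketch: a domain with no named owner is exactly the ambiguity the dual-run rules are meant to remove.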

Track leading indicators, not just lagging ones. Processing time reduction and error rate improvements are lagging indicators. Leading indicators include: number of legacy modules with agent-generated documentation, percentage of workflows with automated evaluation coverage, and mean time to deploy an agent configuration change. These tell you whether your modernization capability is improving, not just whether the current sprint delivered.
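The leading indicators named above are straightforward to compute once the underlying counts are tracked. The sketch below assumes hypothetical counts and timestamps; where those numbers come from (a knowledge base, the CI system, a deployment log) is left open.

```python
from datetime import datetime


def percentage(part, whole):
    """Share of `part` in `whole` as a percentage, rounded to one decimal place."""
    return round(100 * part / whole, 1) if whole else 0.0


def mean_time_to_deploy_minutes(changes):
    """Average minutes between a config change being merged and being live."""
    durations = [(deployed - merged).total_seconds() / 60 for merged, deployed in changes]
    return sum(durations) / len(durations)


# Hypothetical (merged_at, deployed_at) pairs for agent configuration changes.
CONFIG_CHANGES = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 30)),
    (datetime(2026, 1, 6, 14, 0), datetime(2026, 1, 6, 15, 30)),
]

INDICATORS = {
    "modules_documented_pct": percentage(10, 40),          # 10 of 40 legacy modules
    "workflows_with_eval_coverage_pct": percentage(2, 8),  # 2 of 8 workflows
    "mean_time_to_deploy_min": mean_time_to_deploy_minutes(CONFIG_CHANGES),
}
print(INDICATORS)
```

The trend in these numbers over successive waves matters more than any single reading.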

For CIOs evaluating modernization with agentic AI, here is how to sequence the first decisions.

Start with clarity on the “why.” Are you modernizing because of operational cost? Security and compliance pressure? Innovation bottlenecks? Scalability limits? Talent constraints? Each driver leads to different priorities and different success metrics. If you cannot articulate the business case clearly, the modernization will struggle to justify itself regardless of the technology.

Invest in discovery before committing to a path. Use agentic AI to accelerate the discovery phase, but do not skip it. Build a structured understanding of your current system’s architecture, data dependencies, integration landscape, and behavioral complexity. This investment pays for itself many times over by preventing costly surprises later.

Distinguish between migration and modernization. Moving a system to the cloud is not the same as redesigning it. Be explicit about which one you are doing and why. Many failed modernization efforts are actually migration projects that discovered mid-stream they needed to be modernization projects.

Consider AI-native redesign, not just translation. The most impactful modernization projects we have seen do not simply rewrite legacy systems in a modern stack. They reimagine the system around AI-native patterns - conversational interfaces, multi-agent architectures, intelligent document processing, and adaptive workflows. This requires more upfront design work, but it delivers transformative rather than incremental results.

Keep humans in the control plane. Agentic AI is a force multiplier for experienced teams, not a replacement. The most successful modernization efforts use agents for exploration, validation, and repetitive execution, while keeping architectural judgment, domain decisions, and risk ownership with people. This is not a limitation. It is the design.

Plan for continuous modernization. Legacy systems did not become legacy overnight. They accumulated complexity over years. The goal is not a one-time modernization event, but a continuous capability to understand, evolve, and improve systems over time. Agentic AI makes this sustainable in a way that was not previously feasible.

Application modernization with agentic AI is not about finding a shortcut around hard problems. It is about changing the economics of understanding, validating, and transforming complex systems.

The legacy systems that run your business contain decades of encoded knowledge - business rules, edge cases, domain logic, and institutional wisdom. Agentic AI makes it possible to recover that knowledge at a pace and scale that was previously impractical, and to use it as the foundation for genuinely transformative redesigns.

But the fundamentals have not changed. You still need clear business justification. You still need to understand your systems before you change them. You still need experienced engineers making judgment calls. You still need organizational commitment.

What has changed is the speed at which you can move through the hardest parts: understanding what you have, validating what you are building, and executing the thousands of incremental changes that turn a legacy platform into a modern one.

For CIOs and AI leaders ready to move beyond experimentation and into enterprise-grade modernization, agentic AI is not the destination. It is the capability that makes the journey possible.